Don’t be “AI-shy” (part 2)


Reader, in Part 1, you saw what a bleeding-edge tech assessment for a QA Engineer looks like in 2026. Now you'll learn why you can expect to see more of them.

"The share of new code relying on AI rose from 5% in 2022 to 29% in early 2025."

And the trend is expected to continue. Sure, there's skepticism about how much AI can speed up the generation of high-quality code, but AI's ability to do so keeps improving. I don't believe we'll achieve AGI (or that we'd need to for AI to have disastrous impacts on the workforce and the environment), but I do think you should expect more companies to assess an Engineering candidate's ability to use AI to generate code. It's quickly becoming table stakes for meeting new productivity expectations, and it's becoming a normal part of the job (for better or worse).

How do you assess "competence with using AI tools"? Easy. You have the candidate use AI tools.

Yeah, I know. Scaaaary.

What if they cheat?

What if I hire someone who can't write code without AI?

What if we don't have interviewers who know how to use AI tools?

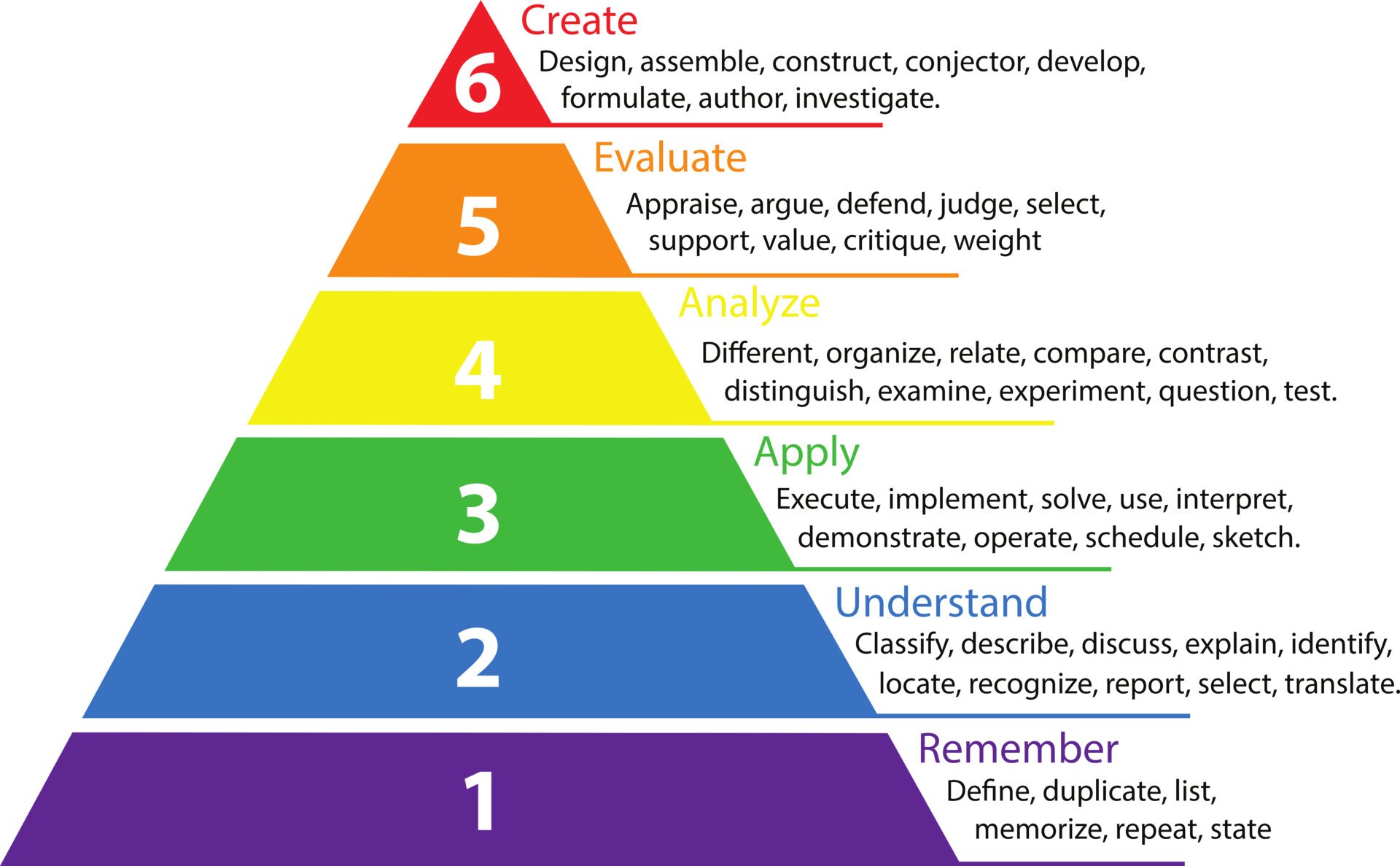

Change sucks, but changing with the times keeps you in the loop with top talent. And believe it or not, there are ways to design your tests to surface those answers, if you're technical enough to spot them. So if you're asking those questions, it's time to change things up.

Let's take a quick look at how traditional assessment rubrics compare to AI assessment rubrics:

What if they cheat?

What does "cheating" mean to you? What does it mean to your hiring managers? You're not testing their ability to remember stuff. You're going to see how much they think and critique while they use AI tools. That's a completely different ball game than checking for correctness. Of course, you'll want someone in the room to evaluate the code quality, but what you're most interested in is how closely the candidate pays attention to what's being generated, and what opinions they form about the AI's output as they work. It's harder to cheat at having good taste than it is to cheat at remembering the correct answers.

What if they're incompetent without AI?

You'll figure this out when they go to prune the AI fluff. It's possible to ask AI to "just clean it up", but that takes longer than manually editing the specific things you dislike about the AI's output. Besides, the "good taste" point from the cheating question applies here too. You can be reassured that the candidate knows how to write good code if they can recognize bad code and know what to replace it with.

What if the interviewer doesn't have experience with AI tools?

Well...time to get some! Or bring in someone from Engineering who does have this experience. Or, worst case, insist that the Engineer on the call do some learning on YouTube beforehand. There's no excuse nowadays for not having this experience. It's been 2 years since Cursor got popular.
The assumption is that if your team is hiring someone who needs to know how to use agentic coding tools, the team is already using them. If you truly don't have anyone with the right experience to evaluate candidates, find someone externally or hire one.

Let's look at the rubric again

First of all, it's not a perfect rubric. I had AI whip one up to save time writing this newsletter. For example, the note about "writing complex XPaths and CSS selectors" isn't a good use of AI, since those selector strategies are typically garbage, or at best a last resort, for a QA Engineer.

That said...what can we learn? Traditional tech assessments tend to measure how skilled a candidate is at producing "the right answer". AI-augmented tech assessments go further. In my teacher education studies, we learned about a framework called Bloom's Taxonomy, where Remember is the most basic thinking skill in the hierarchy, below Understand, Apply, Analyze, Evaluate, and Create.

You can think of traditional assessments as living in the "basement" of the pyramid, while AI-augmented assessments let you spend more time testing higher-order thinking skills. With AI handling the minutiae of generating the correct syntax for the task at hand, you can focus on how the candidate evaluates the code in the context of the overall system they're building. You can hear their opinions about the system and interview them about the tradeoffs of their architectural choices. In essence, your conversations become far more interesting, with fewer hard-and-fast rules about what's "correct". Your team can hire based on a candidate's architectural judgement and cultural alignment, because the AI handles the syntactic burden that used to consume the interview.

What this means for you

Is your team already assessing engineering candidates this way? If not, why not? The goal is to align your technical evaluations so they streamline your interview process and help guide hiring decisions. Many companies have AI initiatives and are trying to select and adopt this technology, so it might be time to have this conversation with your team: top talent is easier to spot when you can have more quality conversations in your interviews. It's much harder to know who you're talking to if the code itself takes up most of the interview time. And with the market the way it is, cutting down your vetting time will be key.

-Steven

The Better Vetter Letter

Helping tech recruiters vet client requirements and job candidates for technical roles by blending 20+ years of Engineering & Recruiting experience.
